The conversation about artificial intelligence and employment has long been dominated by a single, anxiety-laden question: how many jobs will be lost? It is a framing that produces dramatic headlines and unproductive fear in equal measure. It is also, increasingly, the wrong question entirely.

Gartner’s research on AI’s impact on the workforce introduces a more precise and useful lens, one that moves the discussion away from binary displacement narratives toward something richer and more consequential: the concept of ripple effects. Just as a stone dropped into still water sets off expanding, overlapping waves that disturb the surface long after the initial impact, AI’s introduction into organizational life creates cascading secondary changes in how work is structured, in what skills are valued, in how teams are assembled, and in how decisions are made.

To plan only for headcount reduction is to watch only the first ring in the water.

“The question is not whether AI will change your workforce. It will. The question is whether your organization will shape that change or be reshaped by it.”

There is a persistent temptation to frame AI’s impact on work as a straightforward substitution problem: a machine replaces a task, a task disappears, and a worker becomes redundant. This model is clean and legible and almost entirely inadequate.

In practice, AI’s effect on a role is rarely clean subtraction. More often it is a transformation, a reallocation of cognitive labour, a reorganization of what demands human attention and what does not. Routine cognitive work declines. Non-routine, judgement-intensive, and relational work becomes more prominent, not because AI cannot reach it yet, but because it is precisely where human beings continue to add distinctive value.

Consider what happens when a legal analyst gains access to an AI system that can review contracts in seconds. The analyst does not simply disappear. The role redistributes: less time on mechanical review, more on interpretation, client counsel, and complex judgement calls. The work is genuinely different, more demanding in some dimensions, less taxing in others. The ripple from that one automation touches how the team is staffed, what training becomes necessary, and even how clients perceive the firm’s value proposition.

This is ripple logic. It requires organizations to think not in terms of tasks eliminated but in terms of whole ecosystems of work being reorganized.

To bring analytical structure to what can easily become amorphous speculation, Gartner proposes a framework built on two essential variables: AI autonomy, the degree to which AI systems make and execute decisions independently, and work transformation, the extent to which the fundamental nature of the work changes, not merely the tools used to perform it.

Plotting these axes against each other yields four distinct scenarios. The important thing to understand is that these are not sequential stages on a journey from one state to another. They are concurrent realities that different functions, teams, and roles within a single organization may be experiencing at the same moment.

| Scenario | Name | Description |
| --- | --- | --- |
| I | Humans Fill the Gaps | AI assumes high autonomy over routine work. Humans perform the residual tasks AI cannot handle: judgment calls, edge cases, and relationship management. Headcount may decline in some functions, but roles do not vanish. |
| II | Augmented Productivity | Work itself changes little, but AI becomes a powerful instrument of amplification. Workers do the same jobs better and faster. Organizations grow without proportional headcount growth. |
| III | Human-AI Co-Innovation | Work transforms in character. Humans and AI collaborate in genuinely new ways. Roles evolve toward deep expertise, creative problem-solving, and cross-domain synthesis. |
| IV | AI-First Operations | AI carries the primary operational load. Human involvement concentrates on oversight, governance, strategy, and ethical accountability. Not a human-free future; a differently human one. |

What makes this framework genuinely useful and genuinely challenging is the warning embedded in it. Ripple effects can shift a function from one scenario to another without deliberate design. A team that believes it is in Scenario II, enjoying the productivity gains of augmentation, may find itself in Scenario I if AI autonomy quietly escalates and work volume fails to scale accordingly. Leadership attention is required not at the moment of initial deployment, but continuously, as systems mature and organizational context evolves.
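For teams that want to track this kind of drift in practice, the two axes can be treated as scores and the scenario as a derived label. The sketch below is illustrative only: the 0-to-1 scores, the 0.5 threshold, the `RoleAssessment` and `detect_drift` names, and the sample figures are all assumptions, and the mapping of axis combinations to scenarios is one reading of the descriptions above rather than an official Gartner scoring method.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple


class Scenario(Enum):
    HUMANS_FILL_THE_GAPS = "I: Humans Fill the Gaps"
    AUGMENTED_PRODUCTIVITY = "II: Augmented Productivity"
    CO_INNOVATION = "III: Human-AI Co-Innovation"
    AI_FIRST_OPERATIONS = "IV: AI-First Operations"


@dataclass(frozen=True)
class RoleAssessment:
    """A point-in-time judgement about one role or function (hypothetical scale)."""
    role: str
    ai_autonomy: float          # 0.0 = AI only assists, 1.0 = AI decides and acts alone
    work_transformation: float  # 0.0 = same work with new tools, 1.0 = work changed in kind


def classify(a: RoleAssessment, threshold: float = 0.5) -> Scenario:
    """Map the two axis scores to a quadrant (assumed mapping, assumed threshold)."""
    high_autonomy = a.ai_autonomy >= threshold
    high_transformation = a.work_transformation >= threshold
    if high_autonomy and high_transformation:
        return Scenario.AI_FIRST_OPERATIONS
    if high_autonomy:
        return Scenario.HUMANS_FILL_THE_GAPS
    if high_transformation:
        return Scenario.CO_INNOVATION
    return Scenario.AUGMENTED_PRODUCTIVITY


def detect_drift(
    before: RoleAssessment, after: RoleAssessment, threshold: float = 0.5
) -> Optional[Tuple[Scenario, Scenario]]:
    """Return (old, new) if the role changed quadrant between reviews, else None."""
    old, new = classify(before, threshold), classify(after, threshold)
    return (old, new) if old is not new else None


if __name__ == "__main__":
    # Made-up scores: autonomy rises between two reviews while the work itself
    # stays largely the same.
    first_review = RoleAssessment("claims processing", ai_autonomy=0.3, work_transformation=0.2)
    later_review = RoleAssessment("claims processing", ai_autonomy=0.7, work_transformation=0.2)
    drift = detect_drift(first_review, later_review)
    if drift:
        print(f"{later_review.role}: moved from {drift[0].value} to {drift[1].value}")
```

The scoring itself is the hard part and remains a human judgement. What the sketch adds is the habit of re-scoring on a schedule and flagging any function whose quadrant has changed since the last review.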

If ripple logic holds, several structural patterns emerge with reasonable confidence across sectors and functions.

The rise of hybrid roles

The clean demarcation between technical and business competencies, already eroding over the past decade, will dissolve faster still. Organizations will increasingly prize individuals who can operate credibly in both registers, who understand enough about AI systems to work with them intelligently, while retaining the domain knowledge and human judgement that give that collaboration meaning. These are not replacement roles for lost jobs. They are genuinely new configurations of expertise that the pre-AI organization had no reason to cultivate.

The institutionalization of AI governance

As AI systems take on more autonomous functions, the need for human expertise in overseeing those systems becomes critical rather than optional. Roles focused on AI ethics, bias auditing, model governance, and responsible deployment will expand in parallel with AI capability itself. The smarter the system, the more consequential the oversight. Organizations that treat governance as a compliance burden rather than a strategic function will pay for that choice.

The recalibration of productivity and headcount

One of the more counterintuitive findings in Gartner’s research is that productivity gains and headcount reduction do not move in lockstep. AI often delivers significant efficiency improvements (more output per worker, faster cycle times, reduced error rates) well before organizations begin to restructure their workforce. This creates a window, potentially a substantial one, in which growth and transformation can proceed together. Leaders who interpret AI adoption primarily as a licence to cut staff are likely misreading the situation and forgoing growth opportunities in the process.

“AI is not coming primarily for people. It is coming for inefficiency, for the unnecessary steps, the duplicated effort, the decisions made slowly because information was unavailable or poorly structured.”

Net job creation over the long arc

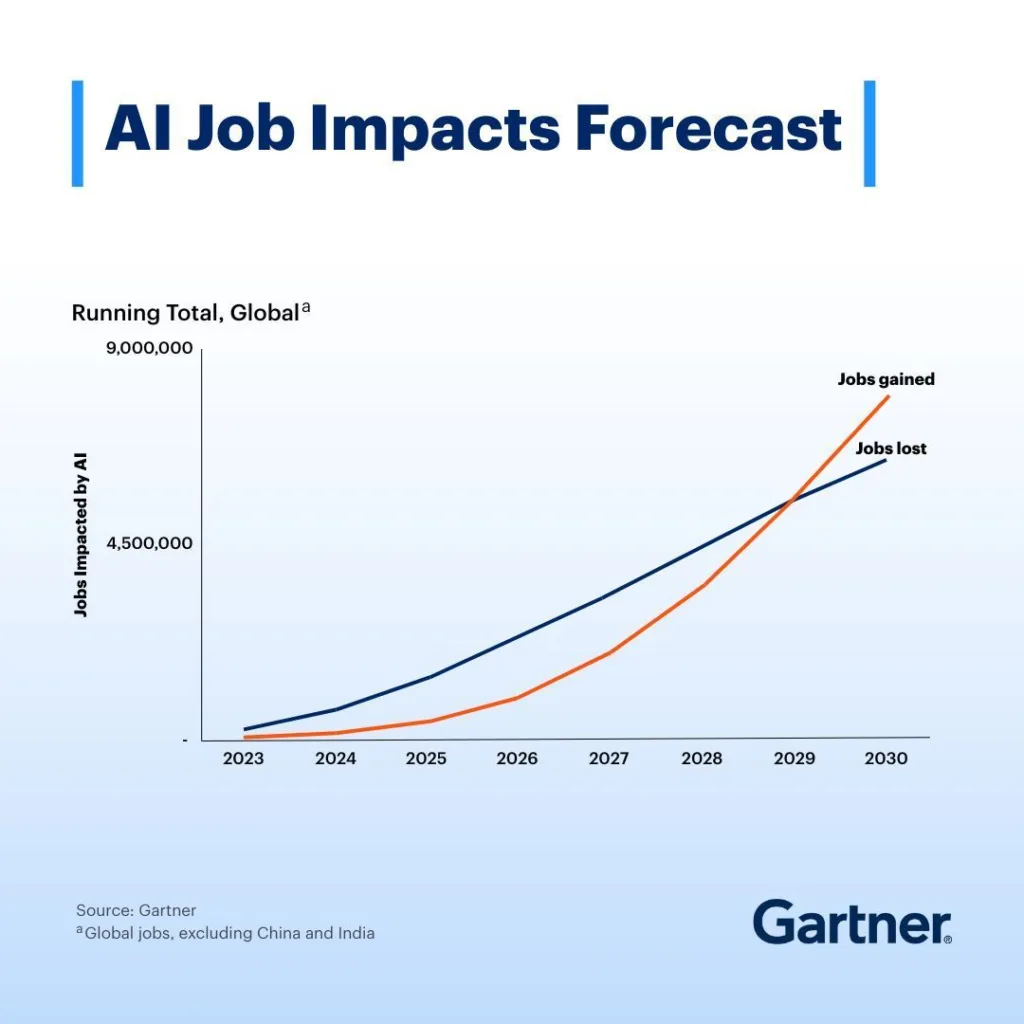

Gartner’s forecast, consistent with broader economic research on previous waves of technological transformation, suggests that AI adoption will ultimately create more jobs than it eliminates, particularly as the technology matures past the current period of acute uncertainty. The current moment — roughly through the mid-2020s — is characterized by relatively neutral net job impact. Beyond that, as new industries, business models, and roles that do not yet fully exist begin to crystallize, the balance shifts positive. This is not a counsel for complacency; the transition carries real costs and dislocations for real people. But it argues strongly against fatalism.

Understanding the conceptual landscape is necessary but insufficient. The question that ultimately matters is: what should leaders actually do?

Honest diagnosis. Before any strategy can be meaningful, organizations must map their current workforce against the four-scenario framework with specificity. Which roles are experiencing which kinds of AI integration? Where is autonomy genuinely high, and where is it aspirational? Where has work been transformed in substance, and where has only the tooling changed? The answers differ significantly across functions, and generalized workforce strategies built on generalized assumptions will be approximately wrong for most of them.

Anticipatory reskilling. The skill sets that AI renders less essential — repetitive data processing, routine pattern recognition, formulaic communication — are not the same as those it elevates. Organizations that build broad AI literacy, not as a technical curriculum but as a foundational organizational capability, are building resilience for an environment where the specific applications will keep changing but the underlying logic of human-AI collaboration will endure.

Role design as strategic work. If jobs are being reshaped by AI, the reshaping should be deliberate. The question of what a given role should look like in an AI-augmented environment—what it retains, what it delegates, what it gains—is not a question that answers itself. It requires active design thinking applied to organizational structure, not just to product or service development.

Strategic humility about pace. AI capabilities are evolving faster than organizational adaptation typically allows. Workforce plans built on today’s understanding of what AI can and cannot do will be outdated before they are fully implemented. This argues not for paralysis but for building dynamic planning processes—shorter cycles, earlier scenario reviews, greater comfort with provisional conclusions—rather than the quadrennial strategic plan followed by implementation.

There is a temptation, when confronted with AI’s expanding capabilities, to frame the human role in future work primarily in terms of what AI cannot yet do. This is defensible as a near-term lens but insufficient as a philosophy.

The more durable case for human centrality in work is not residual—not rooted in the gaps that current AI systems leave unfilled—but generative. Human beings bring to work not just cognitive processing but also moral accountability, contextual wisdom, relational intelligence, and the capacity for the kind of creative synthesis that produces genuinely new ideas rather than sophisticated recombinations of existing ones. These are not mere compensations for AI’s current limitations. They are the qualities that give AI-assisted work its meaning, its direction, and its accountability to the people it serves.

The organizations most likely to thrive in the era Gartner’s research describes are not those that optimize most aggressively for AI autonomy, nor those that resist AI adoption most strenuously. They are those that design with clarity about what they want humans in their organization to do, and build AI systems and workflows that make those humans more capable of doing it.

The ripple has already begun.

The question is not whether your organization will be touched by it but whether, when the water finally stills, the shape it finds is one you chose.

Analysis draws on Gartner research on AI workforce transformation, including the four-scenario framework for human-AI collaboration at work. Strategic Intelligence Review · March 2026
